On Tue, Apr 30, 2019 at 9:27 PM Michael Dickens <address@hidden> wrote:

Hi Moses -

You're doing some very interesting work here! I think you're more likely to get some assistance if you can provide a repo/archive that we can download / clone, then do the usual to configure, build & do testing on to verify your issue. Once verified, there are a variety of ways to try to track down memory issues && the GNU Radio community has many talented programmers / hackers who could help do so. You did a great job writing up what you're doing & the issue, but the barrier to entry / testing / debugging is still a little high. Hope this is useful!

- MLD

On Mon, Apr 29, 2019, at 3:32 PM, Moses Browne Mwakyanjala wrote:

Hello everyone,

I have finished writing a C++ LDPC decoder for the standard CCSDS C2 (8160,7136) LDPC code. To avoid memory allocation issues, I decided to use std::vector<> containers throughout the program instead of C-style mallocs. I can run the program and simulate the BER on my laptop (8 GB RAM) without any problems, and the simulation runs for hours without issues. However, the code leaks memory severely when imported into GNU Radio.

The LDPC class files [ldpc.cc and ldpc.h] and the GNU Radio wrapper files [decodeLDPC_impl.h and decodeLDPC_impl.cc] are attached to this email. The wrapper class constructor initializes the LDPC decoder variable by specifying an "alist" file. It also sets up the message handler function "decode", which uses the LDPC codec to carry out LDPC decoding.

    //Constructor
    set_msg_handler(d_in_port, boost::bind(&decodeLDPC_impl::decode, this, _1));
    ccsdsLDPC = new ldpc(d_file);

The decode message handler receives soft bits (float values) from the recoverCADUSoft block, as shown in the code below. The soft bits are decoded by the wrapper function "decodeLDPC::decode", which uses the function ldpc->decode(softbits,iterations,sigma). Iterative decoding needs a number of iterations to converge successfully.
I use 20 iterations.

    //Decode message handler
    void
    decodeLDPC_impl::decode(pmt::pmt_t msg)
    {
      pmt::pmt_t meta(pmt::car(msg));
      pmt::pmt_t bits(pmt::cdr(msg));
      std::vector<float> softBits = pmt::f32vector_elements(bits);
      // LDPC Decoding
      std::vector<unsigned char> decodedBits = (*this.*ldpcDecode)(softBits);
      if(d_pack){
        uint8_t *decoded_u8 = (uint8_t *)malloc(sizeof(uint8_t)*decodedBits.size()/8);
        for(int i=0; i<decodedBits.size()/8; i++){
          decoded_u8[i] = 0;
          for(int j=0; j<8; j++){
            decoded_u8[i] |= decodedBits[i*8+j]?(0x80>>j):0;
          }
        }
        // Publishing data
        pmt::pmt_t pdu(pmt::cons(pmt::PMT_NIL,pmt::make_blob(decoded_u8,decodedBits.size()/8)));
        message_port_pub(d_out_port, pdu);
        free(decoded_u8);
      }
      else{
        // Publishing data
        pmt::pmt_t pdu(pmt::cons(pmt::PMT_NIL,pmt::make_blob(decodedBits.data(),decodedBits.size())));
        message_port_pub(d_out_port, pdu);
      }
      decodedBits.clear();
      softBits.clear();
    }

My question is: what could be causing the memory leak I am seeing? There are no memory leaks when the class is used outside GNU Radio. To add to the confusion, I saw the same behavior when I was working with another iterative decoder for a Turbo code (only 10 iterations): the code ran smoothly in a standalone C++ application but leaked memory in GNU Radio.

I also have one question regarding buffering in GNU Radio. Since iterative decoding with many iterations and large block sizes takes time to complete, the input PMT data that is not consumed immediately has to be stored somewhere. Is that the case? Could that be the reason for the memory leak?

Regards,
Moses

_______________________________________________
Discuss-gnuradio mailing list

Attachments:

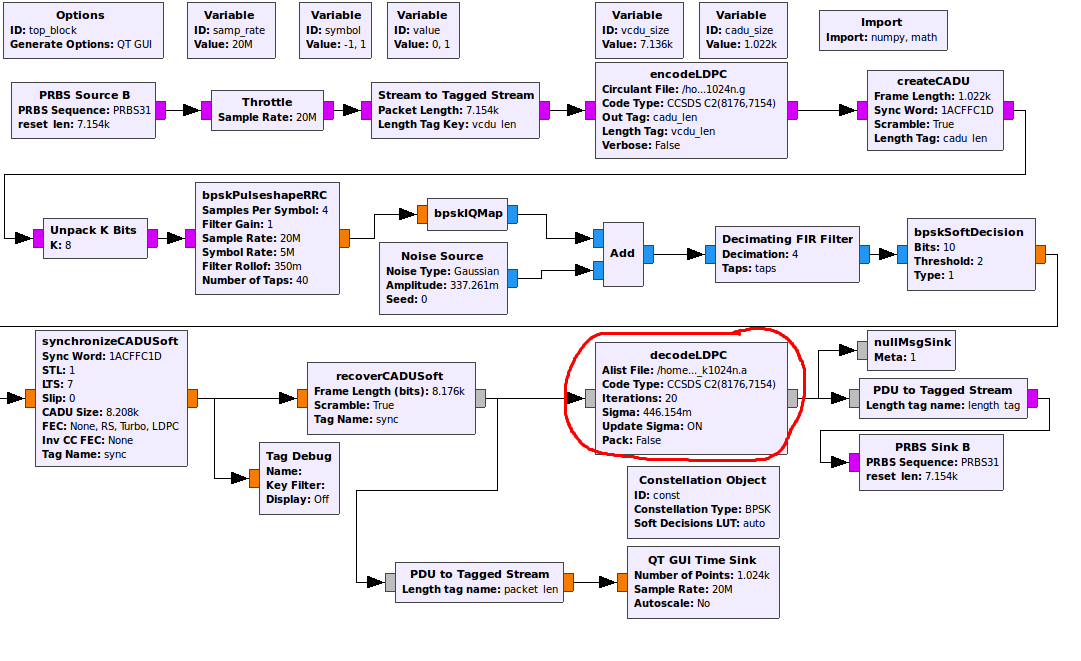

- image.png

- decodeLDPC_impl.cc

- decodeLDPC_impl.h

- ldpc.h

- ldpc.cpp